Spiking Neural Networks: A Revolution in Artificial Intelligence


Introduction

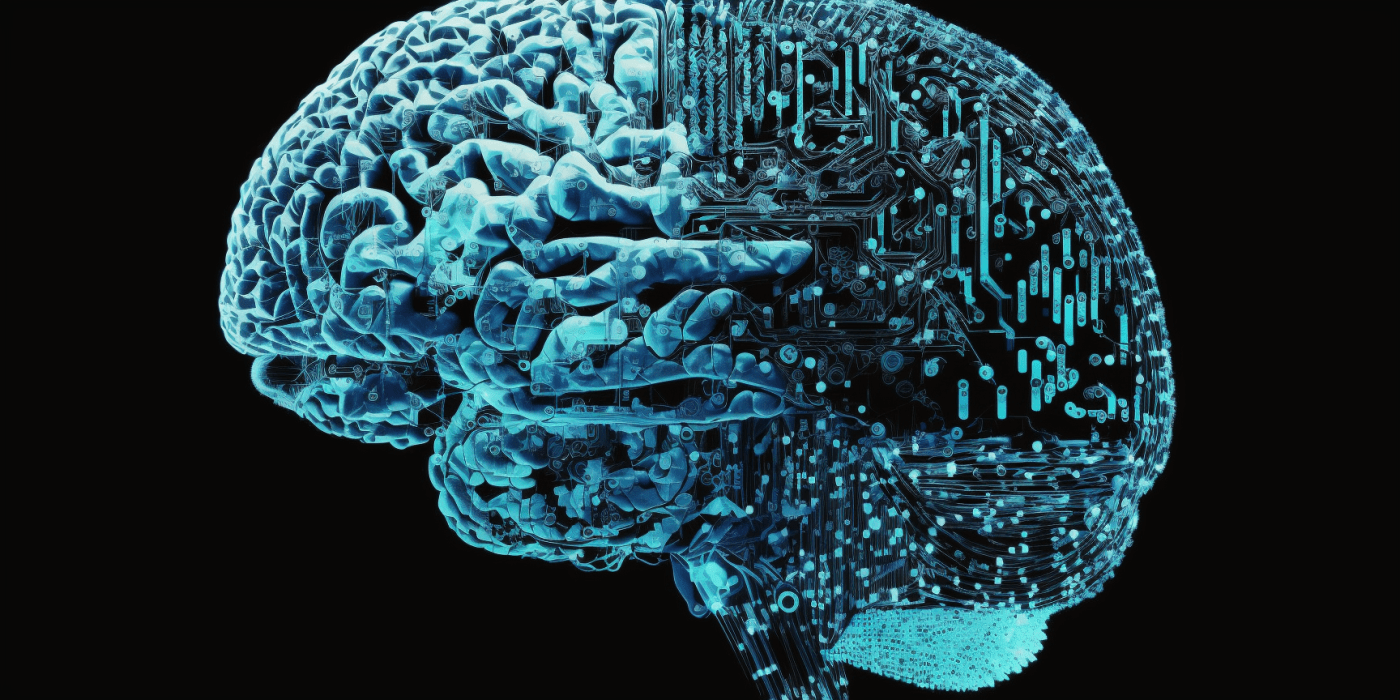

Spiking Neural Networks (SNNs) have attracted growing attention in artificial intelligence. These networks are designed to mimic the behavior of biological neurons, which communicate with each other through brief electrical impulses, or spikes. Traditional artificial neural networks (ANNs) pass continuous-valued activations between neurons. SNNs, by contrast, are event-driven: information is carried by discrete spikes, and computation is triggered by spike events.

While SNNs have been around for several decades, recent advances in hardware and software have made them more efficient and practical for real-world applications. This has led to a surge of interest in SNNs in academic and industrial research, with applications ranging from robotics and autonomous vehicles to speech and image recognition.

In this article, we will provide an overview of SNNs, their structure and function, and their potential for advancing the field of artificial intelligence.

The Structure of Spiking Neural Networks

Spiking Neural Networks consist of neurons, often arranged in layers, that are connected to one another through synapses. A synapse transmits spikes from a presynaptic (sending) neuron to a postsynaptic (receiving) neuron. The strength of each connection is given by a synaptic weight, which can be adjusted during learning.
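To make the role of synaptic weights concrete, here is a minimal Python sketch of spike transmission during one time step. It assumes a sparse adjacency list that maps each presynaptic neuron to its (postsynaptic neuron, weight) pairs. The names `Synapses` and `propagate_spikes` and the example weights are hypothetical and chosen only for illustration. Negative weights stand in for inhibitory synapses.

```python
from typing import Dict, List, Tuple

# Hypothetical sparse connectivity: presynaptic index -> list of (postsynaptic index, weight).
Synapses = Dict[int, List[Tuple[int, float]]]


def propagate_spikes(spiking: List[int], synapses: Synapses, n_post: int) -> List[float]:
    """Return the input each postsynaptic neuron receives in this time step.

    Only neurons that spiked contribute; each spike adds its synaptic weight
    to every target it is connected to.
    """
    current = [0.0] * n_post
    for pre in spiking:
        for post, weight in synapses.get(pre, []):
            current[post] += weight
    return current


synapses: Synapses = {
    0: [(0, 0.5), (1, -0.25)],  # neuron 0 excites post 0 and inhibits post 1
    1: [(1, 0.75)],
    2: [(0, 0.25), (1, 0.5)],
}

print(propagate_spikes([0, 2], synapses, n_post=2))  # [0.75, 0.25]
```

Neuron 1 did not spike, so its synapse is never touched; this is the event-driven behavior described in the introduction.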

In traditional ANNs, each neuron computes a weighted sum of its inputs and applies a continuous activation function. A spiking neuron instead accumulates its incoming input in a membrane potential and emits a spike when that potential reaches a certain threshold. The potential is then reset, the spike is transmitted to other neurons through synapses, and the process repeats.
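The contrast between the two neuron types can be sketched as follows. This is an illustrative sketch, not a reference implementation. The ANN unit assumes a ReLU activation. The spiking unit is a simple integrate-and-fire neuron without leak, with a hypothetical threshold of 1.0 and a reset to 0.0.

```python
from typing import List, Tuple


def ann_neuron(inputs: List[float], weights: List[float]) -> float:
    """Conventional ANN unit: weighted sum followed by ReLU (continuous output)."""
    s = sum(x * w for x, w in zip(inputs, weights))
    return max(0.0, s)


def spiking_step(v: float, input_current: float,
                 threshold: float = 1.0, v_reset: float = 0.0) -> Tuple[float, bool]:
    """One integrate-and-fire update (no leak).

    Adds the input to the membrane potential v; if v reaches the threshold,
    the neuron spikes and v is reset.
    """
    v = v + input_current
    if v >= threshold:
        return v_reset, True
    return v, False


print(ann_neuron([1.0, 0.5], [0.5, 0.5]))  # 0.75

# Drive the spiking neuron with a constant input of 0.4 for six steps.
v, spikes = 0.0, []
for _ in range(6):
    v, spiked = spiking_step(v, 0.4)
    spikes.append(spiked)
print(spikes)  # [False, False, True, False, False, True]
```

The ANN unit returns a graded number on every call. The spiking unit returns only an all-or-nothing event, and its output depends on its history through `v`.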

SNNs can also be highly adaptable. Synaptic weights change with activity, and some models additionally allow synapses to form and dissolve in response to changing input patterns (structural plasticity). Plasticity of this kind is a key feature of biological neural networks and allows SNNs to learn and adapt to new situations over time.

The Function of Spiking Neural Networks

The primary function of SNNs is to process and classify input signals based on their spatiotemporal patterns. Traditional ANNs typically process each input as a static vector. SNNs, by contrast, are designed to handle dynamic and variable input signals, such as those generated by sensors or cameras.
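One way to turn a dynamic sensor signal into spikes is send-on-delta (threshold-crossing) encoding. It works much like an event-based camera, which emits ON/OFF events when brightness changes. The sketch below assumes this encoding scheme, which is only one of several options; rate coding and latency coding are others. The function name `delta_encode` is hypothetical.

```python
from typing import List, Tuple


def delta_encode(signal: List[float], threshold: float) -> List[Tuple[int, int]]:
    """Convert a sampled signal into (time_step, polarity) spike events.

    An event is emitted whenever the signal has moved by at least `threshold`
    since the last event: polarity +1 (ON) for increases, -1 (OFF) for decreases.
    """
    if threshold <= 0:
        raise ValueError("threshold must be positive")
    events: List[Tuple[int, int]] = []
    if not signal:
        return events
    reference = signal[0]
    for t, x in enumerate(signal[1:], start=1):
        # A large jump can produce several events in the same time step.
        while x - reference >= threshold:
            reference += threshold
            events.append((t, +1))
        while reference - x >= threshold:
            reference -= threshold
            events.append((t, -1))
    return events


print(delta_encode([0.0, 0.125, 0.75, 0.75, 1.25, 0.0], threshold=0.5))
# [(2, 1), (4, 1), (5, -1), (5, -1)]
```

A constant signal produces no events at all. Activity, and therefore downstream computation, appears only when the input changes.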

When a neuron receives input, the input is integrated over time to produce a membrane potential. In many models this integration is leaky, so the potential decays back toward its resting value when input stops. If the membrane potential reaches a certain threshold, a spike is generated and transmitted to other neurons through synapses. Downstream neurons then interpret the pattern of spikes generated by the network to produce an output signal.
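The integration step is often modeled with a leaky integrate-and-fire (LIF) neuron. Its membrane potential V follows tau * dV/dt = -(V - V_rest) + R * I(t), where I(t) is the input current. The sketch below assumes the LIF model, a forward-Euler discretization, and dimensionless, hypothetical parameter values: time step 1, tau = 20, threshold 1.0.

```python
from typing import List


def simulate_lif(current: List[float], dt: float = 1.0, tau: float = 20.0,
                 v_rest: float = 0.0, v_reset: float = 0.0,
                 v_threshold: float = 1.0, resistance: float = 1.0) -> List[int]:
    """Simulate one leaky integrate-and-fire neuron with forward-Euler steps.

    Dynamics: tau * dV/dt = -(V - v_rest) + resistance * I(t).
    Returns the indices of the time steps at which the neuron spiked.
    """
    v = v_rest
    spike_times: List[int] = []
    for t, i_t in enumerate(current):
        # Leak toward v_rest plus integration of the input current.
        v += (dt / tau) * (-(v - v_rest) + resistance * i_t)
        if v >= v_threshold:
            spike_times.append(t)
            v = v_reset  # reset after the spike
    return spike_times


weak = simulate_lif([0.5] * 1000)    # settles at 0.5, below threshold
strong = simulate_lif([2.0] * 200)   # crosses threshold periodically
print(len(weak), strong[:3])         # 0 [13, 27, 41]
```

With a constant input of 2.0 the potential crosses the threshold every 14 steps. An input of 0.5 settles at 0.5 and never causes a spike. Stronger input produces a higher firing rate, which is one way spike patterns can carry information about the input.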

The ability of SNNs to handle spatiotemporal patterns makes them well-suited for tasks such as speech recognition, image processing, and robotic control, where the input signals are dynamic and complex.

Learning in Spiking Neural Networks

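A widely studied learning rule for SNNs is Spike-Timing-Dependent Plasticity (STDP). This process adjusts the weight of a synapse based on the relative timing of spikes in the two neurons it connects. STDP is not the only option: gradient-based training with surrogate gradients and the conversion of trained ANNs into SNNs are also widely used.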

Suppose a presynaptic neuron fires shortly before the postsynaptic neuron, so that its spike could have contributed to the postsynaptic spike. In that case the weight of the synapse is increased (potentiation). Conversely, when the presynaptic spike arrives shortly after the postsynaptic neuron has already fired, the weight of the synapse is decreased (depression). In both cases, the closer the two spikes are in time, the larger the change.
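A common way to formalize this rule is pair-based STDP with exponential timing windows. In this sketch, the amplitudes `a_plus` and `a_minus`, the 20 ms time constants, the all-to-all pairing of spikes, and the clipping of weights to [0, w_max] are all assumptions chosen for illustration. Published models use many variants.

```python
import math
from typing import List


def stdp_delta_w(t_pre: float, t_post: float, a_plus: float = 0.01,
                 a_minus: float = 0.012, tau_plus: float = 20.0,
                 tau_minus: float = 20.0) -> float:
    """Pair-based STDP weight change for one pre/post spike pair (times in ms).

    dt = t_post - t_pre
    dt > 0 (pre before post): potentiation, decaying with |dt|.
    dt < 0 (post before pre): depression, decaying with |dt|.
    """
    dt = t_post - t_pre
    if dt > 0:
        return a_plus * math.exp(-dt / tau_plus)
    if dt < 0:
        return -a_minus * math.exp(dt / tau_minus)
    return 0.0


def apply_stdp(w: float, pre_spikes: List[float], post_spikes: List[float],
               w_max: float = 1.0) -> float:
    """Sum the changes from all pre/post pairs (all-to-all) and clip to [0, w_max]."""
    for t_pre in pre_spikes:
        for t_post in post_spikes:
            w += stdp_delta_w(t_pre, t_post)
    return min(max(w, 0.0), w_max)


print(apply_stdp(0.5, pre_spikes=[10.0], post_spikes=[15.0]))  # causal pair: weight grows
print(apply_stdp(0.5, pre_spikes=[15.0], post_spikes=[10.0]))  # anti-causal pair: weight shrinks
```

Setting `a_minus` slightly larger than `a_plus` is a frequent modeling choice that keeps weights from growing without bound under random activity. It is an assumption here, not a requirement of STDP.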

Because STDP relies only on locally available spike timing and needs no labeled data, it allows SNNs to learn and adapt to new input patterns over time. It has been explored for tasks such as object recognition and speech processing.

Advantages

There are several advantages to using SNNs over traditional ANNs. One of the key advantages is their ability to handle dynamic and variable input signals, making them well-suited for tasks such as speech recognition and image processing.

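SNNs can also be highly efficient. Neurons communicate through sparse, binary spikes, so computation is triggered only by spike events, and each event requires only the addition of synaptic weights rather than multiplications. On neuromorphic hardware this can translate into substantially lower energy consumption than comparable ANNs. The savings depend, however, on how sparse the spiking activity is and how many time steps the network needs to reach a given accuracy.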
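A back-of-the-envelope operation count shows where the efficiency comes from and why it is not guaranteed. The sketch assumes a fully connected layer. It counts one multiply-accumulate per weight for the ANN, versus one addition per input spike per target neuron for the SNN, summed over all simulation time steps. The layer sizes and activity levels are hypothetical.

```python
from typing import List


def dense_macs(n_in: int, n_out: int) -> int:
    """Multiply-accumulate operations for one pass of a fully connected ANN layer."""
    return n_in * n_out


def event_driven_adds(spikes_per_step: List[int], n_out: int) -> int:
    """Accumulate operations for a fully connected spiking layer.

    Every input spike adds one weight to each of the n_out targets;
    silent inputs cost nothing. Summed over all time steps.
    """
    return sum(spikes_per_step) * n_out


n_in, n_out, steps = 1000, 100, 20
print(dense_macs(n_in, n_out))                    # 100000
print(event_driven_adds([20] * steps, n_out))     # 2% of inputs active per step: 40000
print(event_driven_adds([100] * steps, n_out))    # 10% of inputs active per step: 200000
```

With sparse activity the SNN needs fewer operations, and each one is a cheaper addition. With denser activity or more time steps, it can need more operations than the ANN.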

Another advantage of SNNs is their adaptability. Their plasticity allows them to learn and adapt to new input patterns over time, which makes them attractive for tasks that require continuous learning, such as robotic control and autonomous vehicles.

Challenges

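While SNNs offer many advantages over traditional ANNs, they also present several challenges. One of the main challenges is the lack of efficient training algorithms. A spike is an all-or-nothing event, so the spike function is not differentiable, and the standard backpropagation used for ANNs cannot be applied directly. Training SNNs therefore requires specialized algorithms that take into account the spatiotemporal nature of spike-based signals.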
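The core difficulty can be seen in a few lines. A spike is a step function of the membrane potential, and the derivative of a step is zero everywhere except at the threshold, so no useful gradient flows through it. Surrogate-gradient training, one widely used workaround, keeps the step in the forward pass but uses a smooth stand-in for its derivative in the backward pass. The fast-sigmoid surrogate and the steepness `beta = 10` below are one illustrative choice among several.

```python
def spike_fn(v: float, threshold: float = 1.0) -> float:
    """Forward pass: Heaviside step. Returns 1.0 (spike) or 0.0 (no spike)."""
    return 1.0 if v >= threshold else 0.0


def true_grad(v: float, threshold: float = 1.0, eps: float = 1e-6) -> float:
    """Central finite-difference derivative of the step.

    It is 0.0 for any v farther than eps from the threshold, so
    backpropagation through the step alone learns nothing.
    """
    return (spike_fn(v + eps, threshold) - spike_fn(v - eps, threshold)) / (2 * eps)


def surrogate_grad(v: float, threshold: float = 1.0, beta: float = 10.0) -> float:
    """Fast-sigmoid surrogate derivative, used in place of the step's derivative
    during the backward pass. It peaks (1.0) at the threshold and decays smoothly."""
    return 1.0 / (1.0 + beta * abs(v - threshold)) ** 2


for v in (0.5, 0.9, 1.0):
    print(v, spike_fn(v), true_grad(v), round(surrogate_grad(v), 4))
```

The surrogate gives neurons just below the threshold a nonzero gradient, telling the optimizer that nudging their input upward would make them spike.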

Another challenge is the lack of hardware support for SNNs. While recent advances in neuromorphic computing have made it possible to implement SNNs on specialized hardware, such hardware is still expensive and not widely available.

Finally, the complexity of SNNs can make them difficult to interpret and debug. Unlike traditional ANNs, which rely on static weights and activations, SNNs are highly dynamic and adaptive, making it difficult to trace the flow of information through the network.

Applications of Spiking Neural Networks

Despite the challenges, SNNs have many potential applications in the field of artificial intelligence. One of the most promising applications is in the field of robotics and autonomous vehicles.

SNNs are well-suited for tasks such as obstacle avoidance, path planning, and object recognition, which makes them promising candidates for autonomous vehicles and robotic systems. Their ability to keep learning and adapting to new situations is a further advantage in real-world applications.

Other potential applications of SNNs include speech and image recognition, natural language processing, and even financial forecasting.

Future Directions in Spiking Neural Networks

The future of SNNs looks bright, with ongoing research focused on developing more efficient hardware and software, as well as new algorithms for training and optimizing SNNs.

One area of research is the development of hybrid networks that combine traditional ANNs with SNNs, allowing for more efficient and accurate processing of dynamic input signals.
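Hybrid approaches and ANN-to-SNN conversion both build on one idea: the firing rate of an integrate-and-fire neuron can approximate a ReLU activation. The sketch below assumes a non-leaky neuron with reset-by-subtraction, constant input, and a threshold of 1.0. `if_rate` is a hypothetical helper.

```python
def relu(x: float) -> float:
    """ReLU activation used by the ANN side."""
    return max(0.0, x)


def if_rate(x: float, steps: int = 1000, threshold: float = 1.0) -> float:
    """Firing rate (spikes per time step) of a non-leaky integrate-and-fire
    neuron driven by a constant input x, using reset-by-subtraction."""
    v, spikes = 0.0, 0
    for _ in range(steps):
        v += x
        if v >= threshold:
            v -= threshold  # keep the overshoot instead of resetting to zero
            spikes += 1
    return spikes / steps


for x in (-0.5, 0.25, 0.3, 0.8, 1.5):
    print(x, relu(x), if_rate(x))
```

For inputs between 0 and the threshold, the rate tracks ReLU closely. Negative inputs give no spikes. Inputs above the threshold saturate at one spike per step, which is one reason conversion methods normalize weights or activations.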

Another area of research is the development of more efficient training algorithms for SNNs, such as those based on reinforcement learning or unsupervised learning.
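Reinforcement-style learning in SNNs is often built on three-factor rules such as reward-modulated STDP. Spike-timing coincidences are stored in a decaying eligibility trace, and the weight changes only when a reward signal arrives. The class below is a hypothetical minimal sketch. The trace time constant, learning rate, and clipping range are assumed values, and the STDP term is passed in rather than computed.

```python
import math


class RewardModulatedSynapse:
    """Hypothetical three-factor synapse.

    Factors 1 and 2: pre/post spike timing, summarized as an STDP term.
    Factor 3: a global reward signal that gates the actual weight change.
    """

    def __init__(self, w: float = 0.5, tau_e: float = 100.0,
                 lr: float = 0.1, w_max: float = 1.0) -> None:
        self.w = w
        self.eligibility = 0.0  # memory of recent spike-timing coincidences
        self.tau_e = tau_e      # decay time constant of the trace (assumed, ms)
        self.lr = lr
        self.w_max = w_max

    def decay(self, dt: float) -> None:
        """Let the eligibility trace fade exponentially over dt milliseconds."""
        self.eligibility *= math.exp(-dt / self.tau_e)

    def record_pair(self, stdp_value: float) -> None:
        """Store an STDP-style term (positive for pre-before-post pairs)
        in the trace instead of applying it to the weight directly."""
        self.eligibility += stdp_value

    def reward(self, r: float) -> None:
        """Apply the weight change gated by reward r, clipped to [0, w_max]."""
        self.w = min(max(self.w + self.lr * r * self.eligibility, 0.0), self.w_max)


syn = RewardModulatedSynapse()
syn.record_pair(0.01)  # a causal spike pair occurred
syn.decay(50.0)        # the reward arrives 50 ms later
syn.reward(1.0)        # positive reward strengthens the recently active synapse
print(syn.w)
```

Separating the trace from the weight lets a delayed reward credit the synapses that were active shortly before it. Plain STDP has no way to do this.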

Conclusion

Spiking Neural Networks represent a major step forward in the field of artificial intelligence. They offer many advantages over traditional ANNs, including the ability to handle dynamic and variable input signals, potential energy efficiency, and adaptability. While challenges remain, ongoing research is focused on developing new hardware and software, as well as new algorithms for training and optimizing SNNs. The future of SNNs looks bright, with potential applications ranging from robotics and autonomous vehicles to speech and image recognition.

Further Reading

If you are interested in learning more about Spiking Neural Networks, here are some recommended resources:


Neuron – Advanced AI with Spiking Neurons

Spiking neural networks aim to advance AI by mimicking biological neurons. Thanks to synaptic plasticity, they have applications in robotics, image recognition, and predictive modeling. Researchers are actively exploring ways to optimize and improve them.

NEST – Unveiling Spike-Based Computing

NEST is a simulation tool for spiking neural networks, used mainly in computational neuroscience and also in robotics and machine learning. It has the potential to push boundaries in multiple domains, but large simulations remain limited by scalability and computational cost. Research into ways of overcoming these limitations will support further progress in the use of spiking neural networks.

General Neural Simulation System – a Revolution Unveiled

GENESIS is a powerful tool for simulating neural systems, allowing researchers to explore the complexities of brain function. Despite technical challenges, its unique features make it invaluable for neuroscience research.

Brian – Learn more about this Spiking Neural Network Simulator

The Brian simulator models spiking neural networks with an emphasis on flexibility and simplicity. The linked article discusses its features, advantages, and case studies. Brian is an ongoing open-source project that supports research into brain function and disorders.